I recently got a request from Ali Kourtiche asking how I would go about finding term frequency and the like in Facebook posts. Ali had a CSV file that he had created using an application called Facepager.

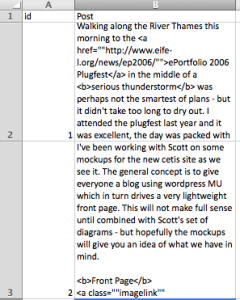

While Facepager looks interesting, I haven’t played with it yet, so I don’t know what the data structure is like. I will therefore assume that Ali has a CSV and that he can get his data into two columns: an id column and a column with the content of each post. I’ve got 31 posts from a blog that I have access to. In Excel it looks something like this:

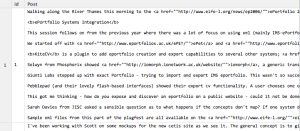

To play with text and find things like term frequency I use two tools, R and RStudio. I won’t go through the installation, but once you have them up and running, open RStudio. We will also need an R package called tm. To install it, look for the Packages tab in RStudio, click Install Packages, type “tm” in the dialog box that opens and click Install.

Now we are going to get the posts into RStudio and get the term frequency.

First create a new R script in RStudio by clicking the little plus button in the top left corner and selecting “R Script”. In the script that we’ve created we first want to load the tm library. To do this, add the following line to the top of your script:

library(tm)

Now we want to import the CSV into the environment. I saved mine on a Mac OS X desktop. To load it we use the following line:

posts <- read.csv("/Users/David/Desktop/posts.csv", header = TRUE)

This reads the CSV and puts it into a data frame in R. header = TRUE is set in my example because the first row contains the column names. To run both of the commands in your script, press the ‘Source’ button. You should now have a data frame that you can explore in R. Under the Environment tab you should see ‘posts’; clicking the little table icon next to it should show you your CSV:
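If you want to check how read.csv treats the header row before pointing it at your own file, here is a minimal sketch that reads a tiny CSV from a string instead of from disk. The column names id and Post and the three rows are made up for illustration; stringsAsFactors = FALSE is my own addition, there so that the post text stays as plain character strings on versions of R older than 4.0.

```r
# A tiny, made-up CSV held in a string: first row is the header,
# then one line per post (id, Post).
csv_text <- 'id,Post
1,"First post about Facebook data"
2,"Second post, with a comma inside the quotes"
3,"Third post"'

# header = TRUE turns the first row into column names;
# text = reads from the string rather than from a file path.
posts_demo <- read.csv(text = csv_text, header = TRUE,
                       stringsAsFactors = FALSE)

str(posts_demo)      # shows the two columns and their types
posts_demo$Post[2]   # the quoted comma stays inside the post text
```

Swapping text = csv_text for the path to your own file should give you the same kind of data frame.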

Before we do anything else we want to create a corpus out of this data frame. We can also prepare the text so that it is easier to analyse. When I prepare the text I usually make it all lower case, then remove punctuation, numbers and common words. To do this, put the following code at the bottom of your current script. The tm package has lists of stopwords to remove; I’m using the English list, but you can change this as you wish:

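corpus <- Corpus(VectorSource(posts$Post)) # create corpus object from the Post column

corpus <- tm_map(corpus, content_transformer(tolower)) # convert all text to lower case (content_transformer() needs tm 0.6 or later)

corpus <- tm_map(corpus, removePunctuation) # strip punctuation

corpus <- tm_map(corpus, removeNumbers) # strip digits

corpus <- tm_map(corpus, removeWords, stopwords("english")) # drop common English words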
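To see what these cleaning steps actually do to the text, here is a small sketch that runs them over two made-up sentences and prints the result. The sentences and the names demo_texts, demo_corpus and cleaned_text are only for illustration, and I have used VCorpus rather than Corpus here on the assumption that it makes pulling the text back out with as.character behave the same across tm versions.

```r
library(tm)

# Two made-up posts with capitals, digits, punctuation and stopwords.
demo_texts <- c("The 31 Posts were GREAT!",
                "Facebook data, and more data.")

demo_corpus <- VCorpus(VectorSource(demo_texts))

# The same four steps as above, applied in the same order.
demo_corpus <- tm_map(demo_corpus, content_transformer(tolower))
demo_corpus <- tm_map(demo_corpus, removePunctuation)
demo_corpus <- tm_map(demo_corpus, removeNumbers)
demo_corpus <- tm_map(demo_corpus, removeWords, stopwords("english"))

# Pull the cleaned text back out as a plain character vector.
cleaned_text <- sapply(demo_corpus, as.character)
print(cleaned_text)
```

The removed words leave gaps of extra spaces behind, which is harmless for counting terms.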

There was a bug on Mac OS X to do with the number of cores my processor has. If you get an error at this point, try this instead:

corpus <- Corpus(VectorSource(posts$Post)) # create corpus object

corpus <- tm_map(corpus, content_transformer(tolower), mc.cores=1) # convert all text to lower case

corpus <- tm_map(corpus, removePunctuation, mc.cores=1)

corpus <- tm_map(corpus, removeNumbers, mc.cores=1)

corpus <- tm_map(corpus, removeWords, stopwords("english"), mc.cores=1)

Now we can play with the data to find a few things that may be of interest.

1. We can create a term document matrix to find how often each term is found in each document using the following:

tdm <- TermDocumentMatrix(corpus)

Or, if you want a matrix weighted by tf-idf rather than raw counts, build a DocumentTermMatrix with a weighting:

dtm <- DocumentTermMatrix(corpus, control = list(weighting = weightTfIdf))
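If tf-idf is new to you, this hand calculation on a made-up count matrix may help. It follows my understanding of what weightTfIdf does by default (term frequency normalised by document length, multiplied by a base-2 log of the inverse document frequency); treat that as an assumption and check ?weightTfIdf for the details. It is a sketch in base R, not tm's own code, and the terms and counts are invented.

```r
# Toy term-document counts: rows are terms, columns are documents.
counts <- matrix(
  c(2, 1, 1,   # "blog" appears in every document
    1, 0, 0,   # "facebook" appears only in doc1
    0, 3, 0),  # "data" appears only in doc2
  nrow = 3, byrow = TRUE,
  dimnames = list(c("blog", "facebook", "data"),
                  c("doc1", "doc2", "doc3"))
)

# Term frequency: each count divided by the length of its document.
tf <- sweep(counts, 2, colSums(counts), "/")

# Inverse document frequency:
# log2(number of documents / number of documents containing the term).
idf <- log2(ncol(counts) / rowSums(counts > 0))

# tf-idf: every row (term) is scaled by that term's idf.
tfidf <- tf * idf
print(round(tfidf, 3))
```

A term that turns up in every document, like "blog" here, gets a weight of zero, which is the point of the weighting: it pushes down words that do not distinguish one post from another.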

2. Ali wanted a list of all the words and how often they were used. I turned the matrix into a data frame of row totals (each row is a word, each column a post), added the words as a column, then reordered the data frame so that the most used words come first:

count <- as.data.frame(rowSums(as.matrix(tdm))) # total uses of each word across all posts

count$word <- rownames(count) # keep the word itself as a column

colnames(count) <- c("count", "word")

count <- count[order(count$count, decreasing=TRUE), ] # most frequent first

You can then press the little table next to count to see the word counts:
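If the adding-up step is unclear, here it is on a tiny made-up matrix in base R, no tm needed. The words and numbers are invented, and toy and toy_count are just names for this sketch.

```r
# Rows are words, columns are posts; each cell is how often
# the word appears in that post.
toy <- matrix(
  c(1, 0, 2,
    0, 1, 0,
    3, 1, 1),
  nrow = 3, byrow = TRUE,
  dimnames = list(c("posts", "facebook", "data"), c("1", "2", "3"))
)

# rowSums() collapses the per-post columns into one total per word.
toy_count <- data.frame(count = rowSums(toy),
                        word = rownames(toy),
                        stringsAsFactors = FALSE)

# Sort so the most used word is at the top.
toy_count <- toy_count[order(toy_count$count, decreasing = TRUE), ]

head(toy_count, 2)   # the two most used words
```

head(count, 10) does the same for the real table if you only want the top ten words in the console.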

There is a lot you can do with a document term matrix or a table of word counts, and I’ll leave it for Ali to do some googling for ideas.

The finished R Script

library("tm")

library("SnowballC")

posts<- read.csv("/Users/David/Desktop/posts.csv", header = TRUE, fileEncoding="latin1")

corpus <- Corpus(VectorSource(posts$Post)) # create corpus object

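# mc.cores=1 is the workaround for the Mac OS X multi-core bug; drop it if your version of tm rejects the argument

corpus <- tm_map(corpus, content_transformer(tolower), mc.cores=1) # convert all text to lower case

corpus <- tm_map(corpus, removePunctuation, mc.cores=1)

corpus <- tm_map(corpus, removeNumbers, mc.cores=1)

corpus <- tm_map(corpus, removeWords, stopwords("english"), mc.cores=1)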

tdm <- TermDocumentMatrix(corpus)

#tdm <- TermDocumentMatrix(corpus, control = list(weighting = weightTfIdf))

mydata.df <- as.data.frame(as.matrix(tdm)) # one row per word, one column per post

count<- as.data.frame(rowSums(mydata.df))

count$word = rownames(count)

colnames(count) <- c("count","word" )

count<-count[order(count$count, decreasing=TRUE), ]
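Once the script has run, two quick checks in the console are head(count, 10) for the ten most used words and tm's findFreqTerms for every term at or above a given total. Here is a small sketch of the latter on three made-up posts; demo_corpus and demo_tdm are names I have chosen for the example.

```r
library(tm)

# Three made-up, already lower-case posts.
demo_corpus <- Corpus(VectorSource(c("facebook posts",
                                     "posts about data",
                                     "posts")))

demo_tdm <- TermDocumentMatrix(demo_corpus)

# Terms that occur at least twice across all posts.
frequent_terms <- findFreqTerms(demo_tdm, lowfreq = 2)
print(frequent_terms)
```

Raising or lowering lowfreq is a quick way to get a feel for the data before building the full table.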

8 Comments

Ali Kourtiche · January 7, 2014 at 6:23 pm

Hello, I use tf-idf and I want to see each word and its corresponding tf-idf in the corpus (just the first 10 words)?

dtm <- DocumentTermMatrix(crude, control = list(weighting = weightTfIdf))

findFreqTerms(tdm, 2, 3)???

David Sherlock · January 7, 2014 at 7:49 pm

mydata.df <- as.data.frame(as.matrix(dtm))

count<- as.data.frame(colSums(mydata.df))

count$word = rownames(count)

colnames(count) <- c("count","word" )

count<-count[order(count$count, decreasing=TRUE), ]

Dorvak · January 21, 2014 at 12:22 pm

Hi, I am the creator and one of the two developers of the Facepager.

The output of the CSV files may be customized, so that you can control the columns. While some columns are exported by default (e.g. the ID of a Facebook post or tweet and the time of the query), custom columns may be created by the user (even information which is nested inside the JSON response of an API, e.g. the column “entities.hashtags.2.text” will provide the third hashtag of a tweet etc.)

Greetings,

Till Keyling

sandeep · February 15, 2014 at 5:47 pm

Hello, I am new to R.

So I am using the tm package and I need to get the count of only a few specific words. What can I use to get the count of a keyword?

sandeep · February 15, 2014 at 5:48 pm

Hello, I am new to R.

So I am using the tm package and I need to get the count of only a few specific words. What can I use to get the count of a keyword?

thanks in advance

Kathrin · December 9, 2015 at 12:42 pm

Hi, is it possible to look for a list of specific strings (a column in Excel or a text file) in the posts (text)?

What I’m trying to do is the following:

I have a file with a number of texts

and another one with values I’m interested in. Now I want R to go ahead and look for these values in the texts and, if one is present in a text, assign this value to that text.

Damien · January 5, 2016 at 9:37 pm

Thanks for this walkthrough. I was able to install R and RStudio and get posts, corpus, tdm, count and mydata.df to be created. I expected count or mydata.df to contain 2 columns, one of words and one of the frequency with which each word occurred in total. Instead, there’s a column for every row in my CSV and a ‘1’ if that word occurred in that row. Great, but I need the sum across all CSV rows per word. In the count table, the count column seems to want to do this, but it is accurate only for the first 3 results and the rest are 0. Going to play around with this; as of 30 minutes ago I had never seen R. Thanks again!

Damien · January 5, 2016 at 9:43 pm

nevermind. it’s working now.