This is a very basic how to, I’ve just saved here this here for future reference

I wanted to build game that had a life of it’s own. To do this I wanted to get lots of lists of things. A list of emotions, list of names, etc etc. If I have a look around I might be able to find lists of these things, but since there were so many different categories of things I wanted lists of I decided to scrape it from Wikipedia, well dbpedia. I did this in R because it’s what I know.

First I wanted to store the list in a database, I went with MySQL because I have mamp on my machine making it easy to work with. The RMySQL package is easy to install on OS X, I’ve heard people say there isn’t a binary on Windows so you may have to compile it yourself. Anyway, for me it was a case of:

install.packages(“RMySQL”)

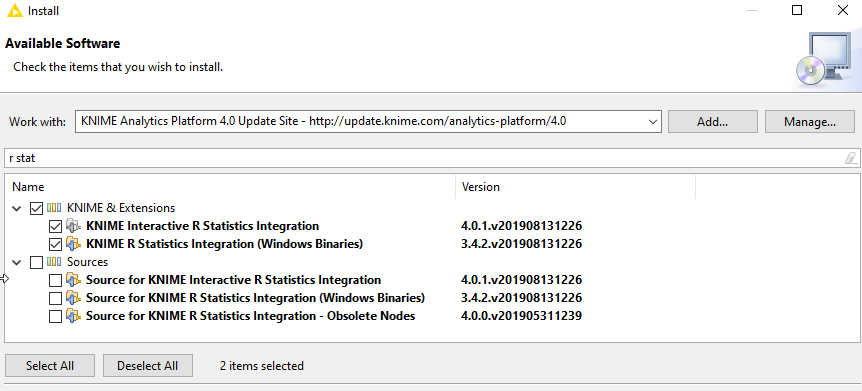

I’m using dbpedia so I installed the sparql plugin

install.packages(‘SPARQL’)

Now load the packages:

library(SPARQL)

library(RMySQL)

Define the endpoint as dbpedia’s and set options as NULL

endpoint <- “http://live.dbpedia.org/sparql”

options <- NULL

Even though I’m not using them all I’m stealing the sparql prefixes that the dbpedia endpoint uses

prefix <- “PREFIX owl: <http://www.w3.org/2002/07/owl#>

PREFIX xsd: <http://www.w3.org/2001/XMLSchema#>

PREFIX rdfs: <http://www.w3.org/2000/01/rdf-schema#>

PREFIX rdf: <http://www.w3.org/1999/02/22-rdf-syntax-ns#>

PREFIX foaf: <http://xmlns.com/foaf/0.1/>

PREFIX dc: <http://purl.org/dc/elements/1.1/>

PREFIX : <http://dbpedia.org/resource/>

PREFIX dbpedia2: <http://dbpedia.org/property/>

PREFIX dbpedia: <http://dbpedia.org/>

“

I then wrote a pretty straight forward query to grab all the English labels to things in the emotion category of wikipedia and put this in to my query, along with the prefixes.

query <- paste(prefix,

‘SELECT ?isValueOf ?name

WHERE {

?isValueOf <http://purl.org/dc/terms/subject> <http://dbpedia.org/resource/Category:Emotions> .

?isValueOf rdfs:label ?name

FILTER(LANG(?name) = “” || LANGMATCHES(LANG(?name), “en”))

}’)

Finally

result <- SPARQL(endpoint,q,ns=prefix,extra=options)$results

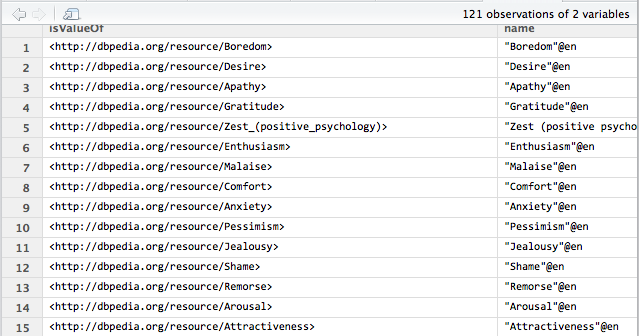

gets me the list of emotions in English in a table like so:

Now for my game I want these in database. Later I’m going to want more information on them, I can grab that from info Wikipedia later, but that can wait until I’m more certain that my game will work. I set up a database called classroom_story (the name of my game) and created a table to store my emotions. Because I’m using MAMP I had to create a symbolic link like so:

ln -s /Applications/MAMP/tmp/mysql.sock /tmp/mysql.sock

The results can then be written to the mysql table very easily:

con <- dbConnect(MySQL(), user=”username”, password=”password”, dbname=”classroom_game”, host=”localhost”,client.flag=CLIENT_MULTI_STATEMENTS)

# write it back

dbWriteTable(con,”emotions”,result,overwrite=T)

dbDisconnect(con)

Currently this is just a list, but I could pull back whatever I want from Wikipedia, I’m going to want to pull out lots of information about lots of things to build my game.

0 Comments