I’m currently doing a PhD at the University of Bolton; part of the process is filling in what the University calls Mansubs. Mansubs are basically there to make you think about what it is you are doing and to give the University some confidence you can do it. I’ve just finished a Mansub called the R1. I don’t know what R1 stands for, but it sets the scene for what I am going to do. The R1 goes to a panel, and before it is accepted you usually have to make some changes.

I’ve submitted mine and it will come back with a request for changes. I’m not sure if I am supposed to publish my form online, but I feel it goes against my work if I don’t. So here is the main chunk of the form; this version is from before I made any of the changes asked of me:

Give an account of the proposed plan of work for the research to be undertaken for the MPhil and/or PhD. Include its relationship to previous work, with references, and its intended outcomes (between 1000 and 1500 words). Please include word count.

1. Aims and Objectives

The intended aim and outcome of this work is to investigate and develop processes or tools that will pair users of technology with statistical, data mining and modelling tools. This intervention will make it possible to address the question, “Can we harness the playfulness of technology to encourage users to reflect on their methods of practice?”

In order to reach this outcome I have identified three domains that require further exploration. Objectives have been created to understand these domains, and the design of any intervention will be based upon findings. The domains and related objectives are:

1) Technology: Survey the creation of technical footprints that can be analysed, and identify the methods available for users to analyse them

2) Play: Establish the relationship of play in the tool or framework. Can play be used to create an environment where users can experiment and learn from their footprint?

3) Politics and Ethics: Establish how organisations influence and control users of technology, whether the tool can educate users about these issues, and what the relationship is between enslavement and emancipation in technological play.

Findings from these objectives will drive both the design of the intervention and the choice of data to be used in its evaluation. Realistic evaluation methods put forward by Pawson and Tilley (1997) will be used to evaluate the intervention.

2. Context of Research

Technology captures data about its users (Kleinberg 2007), whose interactions with technology leave behind ‘footprints’ that can be analysed to obtain an understanding of the user. For example, we can find out what they are interested in, the people that they interact with and the tools they use to complete tasks. These footprints are already being analysed by organisations for their own purposes. A familiar example of a footprint is the data generated by online shoppers, which includes many statistics such as past purchases, sites visited, ratings of products, and shopper sex and age. This provides the market with information about the customer that can be used to create an environment tailored for them (Rowley 2005). A positive-feedback loop is generated: a benefit such as a discount on products the customer may purchase leads to more coupons for similar products in the same shop, which in turn leads to more purchases of the product, together with a richer data set relating to the individual customer. While supermarkets may be using these footprints to control markets, government agencies such as the NSA or GCHQ are using similar technologies in an attempt to control societies (Ball et al. 2013).

In parallel to this explosion of captured and analysed information, there has been an increase in the uptake and usability of data analysis tools that allow for their functionality to be adapted and extended (Rexer 2013). This could involve extending a tool to incorporate new or experimental statistical methods, adding different plotting methods, or allowing data input from new or experimental data formats. The rapid growth in uptake of open source tools such as R means that it is becoming common practice for developers of statistical methods to release their methods in a format which these open tools can import.

The use of technologies is often playful (Goldstein 2013), and entertainment or a social experience is often the driving force behind technological innovation. The playful elements of technology can also be seen as exploiting the user by leading them to create a certain type of footprint, for example by providing rewards for giving up information, or for giving a certain type of information. Users of technology often find themselves in a game-like environment where they are manipulated into actions by receiving positive feedback from the game. This can involve manipulating them into exposing details of friends or giving feedback on their experience. These are sometimes referred to as ‘viral mechanisms’ (Meidell 2010) and the products that employ these mechanisms as ‘exploitationware’ (Bogost 2011).

In an education setting there are examples of information mechanisms becoming a positive-feedback loop, in which students find themselves trapped in a game where the feedback mechanism itself has an effect on the outcome. One example is students at the University of Kingston being told that low scores on the student satisfaction survey will affect the perceived quality of the University (Coughlan 2008). The students are placed in a position where their future prospects are harmed if they give anything other than positive feedback; this in turn encourages other students to join the University, who will also have to play this game with the University feedback system.

Footprints and the ability to analyse them have roots in Learning Analytics, a field that explores analytics across educational institutions. In the Cetis Analytics Series, Powell and MacNeil (2012) identify the individual learner as one of many stakeholders; analytics give learners the ability to reflect on their achievements and patterns of behaviour in relation to others.

2 Phases of work

2.1. Domains and associated objectives

2.1.1 Technology

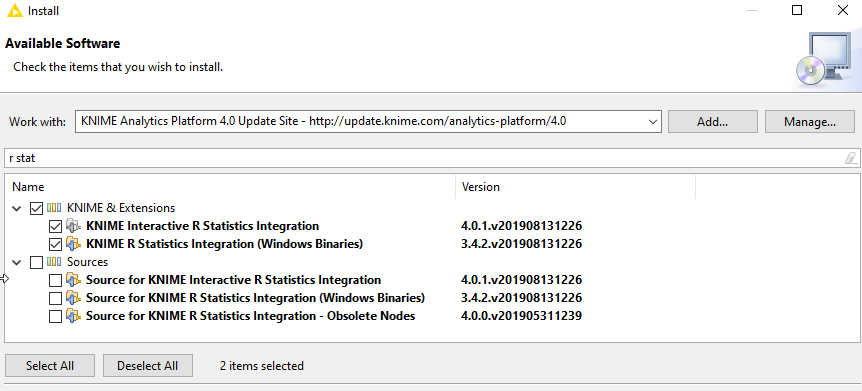

The tools and processes available to stakeholders in Learning Analytics have been evaluated by the candidate (Kraan and Sherlock 2013), but it was found that the processes are often out of the reach of learners because their data is unavailable or the tool demands skills they do not have. To test the possibility of bringing user data and statistical methods together, the candidate developed a series of R scripts (Sherlock 2013). These scripts scraped content at URLs identified as relevant to a user and used statistical methods to generate ‘topics’ that they identified as relevant to the user. These scripts were used as a foundation for work within the European-funded project TRAILER, where a graphical web-based interface was added (Sharples 2013).
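The scrape-then-summarise idea described above can be illustrated with a minimal sketch. It is not the candidate's R code: the proposal does not say which statistical method the scripts used, so this Python example substitutes a simple TF-IDF ranking as a stand-in, and the function names, the stop-word list and the example URLs are all hypothetical. It also assumes the page text has already been downloaded, so the scraping step itself is left out.

```python
import math
import re
from collections import Counter

# Hypothetical, deliberately tiny stop-word list (an assumption for this sketch).
STOP_WORDS = {"the", "a", "an", "and", "of", "to", "in", "is", "for", "on", "with", "that", "it"}


def tokenize(text):
    """Lower-case the text and keep alphabetic words that are not stop words."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOP_WORDS]


def top_terms(pages, k=3):
    """Return the k highest-scoring TF-IDF terms for each page.

    `pages` maps a URL to text that has already been fetched.
    TF-IDF is a stand-in here, not the method used in the original scripts.
    """
    tokens = {url: tokenize(text) for url, text in pages.items()}
    n_pages = len(tokens)

    # Document frequency: in how many pages does each term appear?
    doc_freq = Counter()
    for words in tokens.values():
        doc_freq.update(set(words))

    result = {}
    for url, words in tokens.items():
        counts = Counter(words)
        # Term frequency within the page, weighted by how rare the term is overall.
        scores = {
            term: (count / len(words)) * math.log(n_pages / doc_freq[term])
            for term, count in counts.items()
        }
        # Sort by descending score, breaking ties alphabetically for stable output.
        ranked = sorted(scores, key=lambda t: (-scores[t], t))
        result[url] = ranked[:k]
    return result


if __name__ == "__main__":
    # Hypothetical footprint: two pages a user has visited.
    pages = {
        "https://example.org/a": "Python python data analysis",
        "https://example.org/b": "Football football data match",
    }
    print(top_terms(pages, k=2))
```

Terms that appear on every page score zero, so the ranking surfaces what is distinctive about each page. A fuller implementation might swap in a proper topic model, which is exactly the kind of choice the proposal suggests leaving to the user.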

While the scripts and resulting EU work showed that it is technically possible to create such a tool, they raised questions about its technical limitations, since the tool predetermined both the statistical methods and the footprints that could be used with it. Further investigation into this domain expands upon the work undertaken by the candidate within the TRAILER project. The feasibility of expanding the array of footprints available to users, and any inherent bias in the footprints identified, will be researched. The technical ability to take these footprints and then allow the user to select an appropriate way to analyse them will be investigated.

2.1.2 Play

My PhD work explores play in two ways. Firstly, it positions the user in an environment in which they can explore, in a context similar to instructional scaffolding (Sawyer 2006). Secondly, it investigates how play is being used to manipulate the user into creating specific data or performing certain tasks. The ethics of big data are explored, along with the possibility that empowering users with these processes moves the locus of control from organisations to the user (Bogost 2011).

Play-related questions are raised around the fundamental importance of play in exploring our environment: should the system use theories of play to encourage the user to explore their footprint? If so, how do we deal with the tension between using play as a mechanism to model and explore an environment and play as ‘exploitationware’?

As part of work for the European-funded project ‘Omelette’ I have been able to explore play by creating playful web-based widgets that encourage users to share data in real time about their experiences. These were rolled out at a series of group events to encourage people to generate a footprint that can be analysed in real time by people at the event. A series of visual representations of the data gave instant feedback to the group. A review of research into experimentation and learning through play, and an evaluation of trials run in the Omelette project, are the foundations of this segment of work. Further work includes research into theories of play as a method of exploration and analysis of feedback from events where these web widgets have been deployed.

2.1.3 Politics and Ethics

Politics and ethics research questions overlap the other two domains, and research will be done in parallel with them. Issues of ownership, privacy and control surround the concept of footprints and any bias they contain. Examples of play being used to influence users fuel the research question: “What is the relationship between enslavement and emancipation in technological play?”

2.2 Design and Development of intended outcome

The design of any intervention depends on the outcomes of these aims. Data on the effectiveness of any intervention deployed by the work can be gathered from users of the tool, by questioning the users, analysing logs, or examining changes to the user’s footprint before and after using the tool. There is a danger while gathering and analysing this data that the intervention falls foul of using data and play as a control mechanism; to avoid this, findings from research into the areas of play and ethics should steer the development of an intervention. Realistic evaluation methods put forward by Pawson and Tilley (1997) will be used to evaluate the intervention.

Wordcount (1499)

References

Ball J, Borger J, and Greenwald G. (2013) Revealed: how US and UK spy agencies defeat internet privacy and security. The Guardian.

Bogost, I. (2011) Persuasive Games: Exploitationware, Gamasutra

Coughlan, S. (2008). University staff faking survey. BBC News. Available at: http://news.bbc.co.uk/1/hi/education/7397979.stm. Date accessed: 01 Mar. 2014.

Goldstein, J. (2013) Technology and play. Scholarpedia 8.2:30434.

Rowley, J. (2005) Building brand webs: Customer relationship management through the Tesco Clubcard loyalty scheme, International Journal of Retail & Distribution Management, Vol. 33 Iss: 3, pp.194 – 206

Kleinberg J. (2007). Challenges in mining social network data: processes, privacy, and paradoxes. Proceedings of the 13th ACM SIGKDD international conference on Knowledge discovery and data mining.

Kraan, W and Sherlock, D. (2013). Institutional Readiness for Analytics A Briefing Paper. CETIS Analytics Series. Cetis Publications.

Literat, I. (2012) The Work of Art in the Age of Mediated Participation: Crowdsourced Art and Collective Creativity. International Journal of Communication, [S.l.],ISSN 1932-8036.

Meidell, B. (2010) What I Learned From FarmVille, So You Don’t Have To Play It, blog post on http://meidell.dk last accessed February 7th 2013

Powell, Stephen, and Sheila MacNeil. (2012) Institutional Readiness for Analytics A Briefing Paper. CETIS Analytics Series.

R Core Team (2013). R: A language and environment for statistical computing. R Foundation for Statistical Computing, Vienna, Austria. URL

Sawyer, R. Keith. (2006). The Cambridge Handbook of the Learning Sciences. New York: Cambridge University Press.

Sharples, Paul. Data Analytics (2013). GitHub Repository: https://github.com/ps3com/data-analytics. Date accessed: 01 Mar. 2014.

Sherlock, David. Personal Corpus (2013). GitHub Repository: https://github.com/ds10/Personal-Corpus. Date accessed: 01 Mar. 2014.
